In Latest Shot At Nvidia, Google Unveils Two Chips For The Agentic Era

In a blog post titled “Our Eighth Generation TPUs: Two Chips for the Agentic Era,” Google Senior Vice President and Chief Technologist for AI and Infrastructure Amin Vahdat unveiled the latest generation of the company’s in-house AI chips, splitting the lineup into two versions.

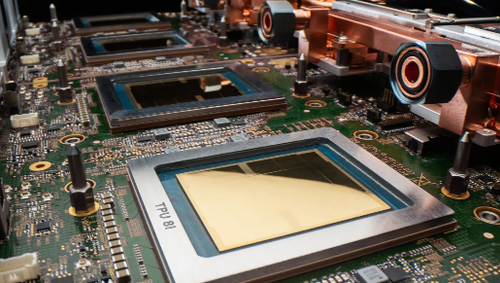

The move takes direct aim at Nvidia: the TPU 8t is designed for training AI models, while the TPU 8i is built for inference, or running AI services once they are developed and deployed.

“The culmination of a decade of development, TPU 8t and TPU 8i are custom-engineered to power the next generation of supercomputing with efficiency and scale,” Vahdat wrote in the blog post.

He continued:

“Hardware development cycles are much longer than software. With each generation of TPUs, we need to consider what technologies and demands will exist by the time they are brought to market.

“Several years ago, we anticipated rising demand for inference from customers as frontier AI models are deployed in production and at scale.

“And with the rise of AI agents, we determined the community would benefit from chips individually specialized to the needs of training and serving.”

Vahdat pointed out that the new chips store more data directly on the processor, helping reduce delays and improve responsiveness, especially for more complex AI models that reason through tasks in steps. He also emphasized efficiency, saying the TPU 8t delivers 124% more performance per watt than the prior generation, while the TPU 8i improves that metric by 117%. That matters because the power bill crisis has swayed local politicians in many states and become a major roadblock to data center buildouts.
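
For readers who want to translate those percentages into something more tangible, here is a rough back-of-the-envelope sketch. It assumes "X% more performance per watt" means a relative gain over the prior generation on a comparable workload, which the blog post summary does not spell out. The function names are illustrative only and are not taken from Google.

```python
def perf_per_watt_multiplier(percent_gain: float) -> float:
    """Convert 'X% more performance per watt' into a multiplier.

    Assumption: the gain is relative to the prior generation,
    so a 124% gain means 1 + 1.24 = 2.24 times the old figure.
    """
    return 1.0 + percent_gain / 100.0


def energy_per_unit_work(percent_gain: float) -> float:
    """Energy needed for the same work, as a fraction of the prior generation.

    Energy per unit of work is the inverse of performance per watt.
    """
    return 1.0 / perf_per_watt_multiplier(percent_gain)


if __name__ == "__main__":
    # Figures as reported in the article above.
    for chip, gain in (("TPU 8t", 124.0), ("TPU 8i", 117.0)):
        multiplier = perf_per_watt_multiplier(gain)
        energy = energy_per_unit_work(gain)
        print(f"{chip}: {multiplier:.2f}x perf/watt, "
              f"{energy:.0%} of prior energy for the same work")
```

Under that assumption, the reported gains work out to roughly 2.24x and 2.17x the prior generation's performance per watt, or a little under half the energy for the same amount of work. Real-world savings would depend on workload and utilization, which this sketch ignores.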

The announcement highlights Google’s move to become a leader in producing in-house AI chips despite Nvidia’s continued dominance. At the same time, Google said it will continue offering Nvidia-based systems to customers.

The broader message is that Google is trying to strengthen its AI infrastructure advantage by tailoring its chips more precisely for the distinct demands of training and inference.

Across the industry, Microsoft, Meta, Amazon, Apple, and others are also pursuing custom AI chips for specialized workloads.

Shares of Google were modestly higher in premarket trading after the blog post, rising about 1.5%. Even so, the stock has traded sideways this year.

TPUs have powered Google’s Gemini models for years, and the latest announcement signals a push to deliver greater scale, efficiency, and responsiveness across both training and serving workloads.

Tyler Durden

Wed, 04/22/2026 – 09:00